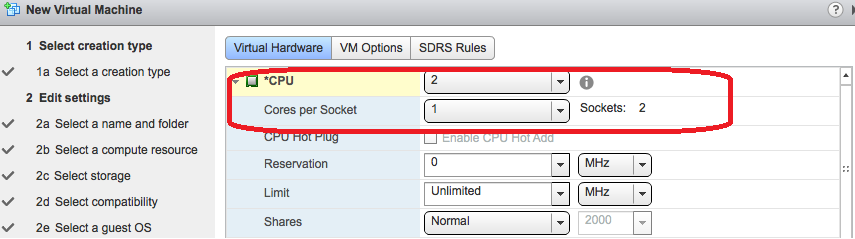

Quite often I am asked about the impact of selecting different Virtual Machine vCPU layouts via the VM CPU options. For example, what is the outcome of selecting 2 VM processors with 1 core versus 1 VM processor with 2 cores? Let's have a look at the options in question...

In the above example we are creating a dual-processor VM, and we could select 1 virtual socket with 2 cores or 2 virtual sockets with 1 core each. Does it make a difference which we choose?

No. At least not in this case, specifically where we have 8 or fewer vCPUs. More about this later.

Changing the core count is a useful way of overcoming potential guest application licensing issues, as discussed here: http://kb.vmware.com/kb/1010184. In terms of performance, however, the hypervisor schedules vCPUs on the underlying physical CPUs in exactly the same way in both cases, so neither layout gives a performance advantage. But is this ALWAYS true?
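
To make the arithmetic concrete, here is a small Python sketch that lists every sockets × cores-per-socket combination for a given vCPU count. The helper name `vcpu_layouts` is purely illustrative. In a VM's .vmx file these two settings typically appear as `numvcpus` (total vCPUs) and `cpuid.coresPerSocket`. Every layout gives the hypervisor the same total number of vCPUs to schedule; only the socket count that the guest (and its licensing) sees changes.

```python
def vcpu_layouts(total_vcpus: int) -> list[tuple[int, int]]:
    """Hypothetical helper: return every (sockets, cores_per_socket) pair
    whose product equals total_vcpus."""
    layouts = []
    for cores_per_socket in range(1, total_vcpus + 1):
        # Only whole numbers of sockets are valid, so cores must divide evenly.
        if total_vcpus % cores_per_socket == 0:
            layouts.append((total_vcpus // cores_per_socket, cores_per_socket))
    return layouts


if __name__ == "__main__":
    for sockets, cores in vcpu_layouts(4):
        # Same vCPU total every time; only the socket count differs.
        print(f"{sockets} socket(s) x {cores} core(s) = {sockets * cores} vCPUs")
```

For the dual-processor VM above, `vcpu_layouts(2)` yields just the two options shown in the dialog: 2 sockets × 1 core, or 1 socket × 2 cores.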

Not necessarily. To complicate matters, we have something called NUMA (Non-Uniform Memory Access) and its virtual counterpart, vNUMA.

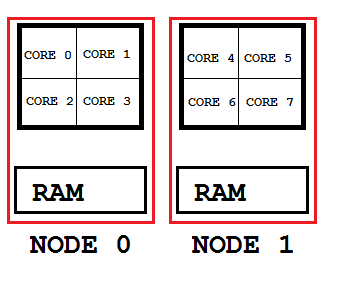

Modern CPUs are ferociously powerful and have no problem crunching vast quantities of instructions. Our problem is getting those instructions and their data to the CPU from main memory, which is already busy servicing other CPUs, so the memory bus becomes a bottleneck. To compensate, NUMA systems (such as many from IBM) break CPU and memory down into several blocks, or nodes, with each node having its own dedicated access to memory. Each NUMA node has a certain number of cores and a certain amount of local memory.

The general upshot of this is that the VMkernel is NUMA aware and will attempt to schedule a VM within a single NUMA node, as this is more efficient from a memory access perspective. It is therefore beneficial if your VM's vCPU count AND memory allocation are able to fit into a single NUMA node.
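
A minimal sketch of that fit check follows. It assumes a hypothetical host whose NUMA nodes each have 8 physical cores and 64 GB of local memory (substitute your own hardware's figures), and the names `NumaNode` and `fits_in_one_node` are illustrative only. It deliberately ignores hyperthreads and the hypervisor's own memory overhead, so treat it as a first-pass sanity check rather than a description of how the VMkernel actually places VMs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumaNode:
    """Capacity of one physical NUMA node (example values are assumptions)."""
    cores: int       # physical cores only; hyperthreads are ignored here
    memory_gb: int   # local memory attached to this node


def fits_in_one_node(vcpus: int, memory_gb: int, node: NumaNode) -> bool:
    """True only if BOTH the vCPU count and the memory fit in one node."""
    return vcpus <= node.cores and memory_gb <= node.memory_gb


if __name__ == "__main__":
    node = NumaNode(cores=8, memory_gb=64)   # assumed host node size
    print(fits_in_one_node(4, 32, node))     # vCPUs and memory both fit
    print(fits_in_one_node(4, 96, node))     # vCPUs fit, memory does not
    print(fits_in_one_node(12, 32, node))    # memory fits, vCPUs do not
```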

If this is not feasible due to application requirements (the VM needs more CPU or memory than a single node contains), then not to fear: the hypervisor will quite happily split the VM across multiple nodes (referred to as "wide VM" support). It is worth mentioning at this point that before vSphere 4.1, if a VM was larger than its node, NUMA was disregarded entirely and any advantages of NUMA were lost.

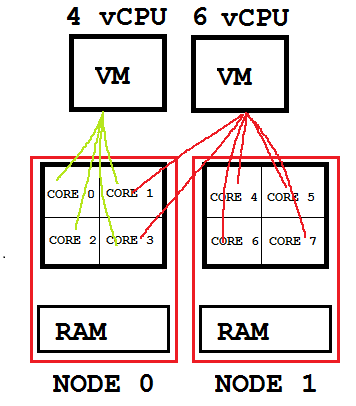

Assuming that your VM is the right size for the job, it will perform better operating as a "wide VM" than if it were downsized to fit within a single NUMA node. The image above compares a "local" VM to a "wide" VM. Both examples are acceptable, although the VM with 4 vCPUs will have greater memory locality than the 6 vCPU machine.
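
Continuing the sketch, we can estimate how many nodes a VM must span. The node size used here (4 cores, 32 GB) is an assumption chosen so that a 4 vCPU VM stays local while a 6 vCPU VM becomes wide, matching the comparison described above. The locality figure is rougher still: it assumes the VM's memory is split evenly across its nodes and accessed uniformly, which real workloads rarely do exactly. Both helpers are hypothetical, and the real ESXi scheduler makes its own placement decisions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class NumaNode:
    cores: int
    memory_gb: int


def nodes_spanned(vcpus: int, memory_gb: int, node: NumaNode) -> int:
    """Minimum number of nodes needed to hold the VM's vCPUs and memory."""
    by_cpu = math.ceil(vcpus / node.cores)
    by_mem = math.ceil(memory_gb / node.memory_gb)
    return max(by_cpu, by_mem, 1)


def approx_local_fraction(vcpus: int, memory_gb: int, node: NumaNode) -> float:
    """Rough share of memory accesses that stay node-local, assuming memory is
    spread evenly over the spanned nodes and accessed uniformly."""
    return 1 / nodes_spanned(vcpus, memory_gb, node)


if __name__ == "__main__":
    node = NumaNode(cores=4, memory_gb=32)   # assumed node size
    for vcpus in (4, 6):
        n = nodes_spanned(vcpus, 16, node)
        frac = approx_local_fraction(vcpus, 16, node)
        print(f"{vcpus} vCPUs: spans {n} node(s), ~{frac:.0%} local accesses")
```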

But what about our original question of vCPU layout? Well, now we have to consider vNUMA!

If a VM has more than 8 vCPUs (that is, 9 or more), vNUMA is enabled by default. vNUMA surfaces a version of the host's NUMA architecture to the guest OS and allows the guest to make scheduling decisions based upon the revealed topology. It is easier for vNUMA to present an appropriate topology to your VM if the vCPU layout is flat, that is to say, one core per virtual socket (which is the default).
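
The threshold itself comes from the per-VM advanced setting `numa.vcpu.min`, whose documented default is 9, which is why vNUMA only appears once a VM has more than eight vCPUs (on virtual hardware version 8 or later). The function below is a hypothetical illustration of that rule, not a VMware API.

```python
# Documented default of the per-VM advanced setting numa.vcpu.min.
DEFAULT_NUMA_VCPU_MIN = 9


def vnuma_exposed_by_default(vcpus: int, vcpu_min: int = DEFAULT_NUMA_VCPU_MIN) -> bool:
    """Hypothetical helper: True if a VM with this many vCPUs is given a vNUMA
    topology under the given numa.vcpu.min value (hardware version 8+ assumed)."""
    return vcpus >= vcpu_min


if __name__ == "__main__":
    for n in (4, 8, 9, 16):
        print(n, "vCPUs -> vNUMA by default:", vnuma_exposed_by_default(n))
```

Lowering `numa.vcpu.min` on a VM would expose vNUMA to smaller VMs as well; the `vcpu_min` parameter models that override.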

If, on the other hand, you MUST have multiple cores per virtual socket (due to licensing), then it is best to mimic the physical NUMA layout of your host. A manually applied cores-per-socket configuration overrides the topology vNUMA would otherwise choose, and it may or may not match the physical ESXi NUMA topology. A mismatch degrades performance, and tests have shown the penalty to be as much as 31%.
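
One way to mimic the physical NUMA layout is to use one virtual socket per physical node the VM spans and split the vCPUs evenly between them. The sketch below (hypothetical helper `suggest_layout`; physical cores only, memory not considered) returns that layout as (sockets, cores per socket), or `None` when the vCPUs cannot be split evenly, which is a hint to revisit the vCPU count.

```python
import math
from typing import Optional, Tuple


def suggest_layout(vcpus: int, cores_per_node: int) -> Optional[Tuple[int, int]]:
    """Return (virtual sockets, cores per socket) with one socket per physical
    NUMA node spanned, or None if the vCPUs do not divide evenly."""
    if vcpus < 1 or cores_per_node < 1:
        raise ValueError("vcpus and cores_per_node must be positive")
    # Fewest nodes that can hold the vCPUs; each becomes one virtual socket.
    sockets = math.ceil(vcpus / cores_per_node)
    if vcpus % sockets != 0:
        return None  # an uneven split would not mirror the host's nodes
    # cores per socket can never exceed cores_per_node given how sockets is chosen
    return sockets, vcpus // sockets


if __name__ == "__main__":
    print(suggest_layout(12, 8))  # 12 vCPUs on hosts with 8-core nodes
    print(suggest_layout(10, 4))  # no even split across 3 nodes
```

For example, a 12 vCPU VM on hosts with 8-core nodes gets 2 sockets × 6 cores, mirroring the two nodes it spans.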

So to summarise!

1.) Try to size VM vCPU count and memory so that they fit within a NUMA node (ideally as an even divisor of it), unless application demands rule this out.

2.) vNUMA is enabled by default in VMs that have more than 8 vCPUs. It is better to keep the CPU layout of these VMs "flat" (one core per virtual socket).

3.) If this is not possible, design the processor and core count to match your host NUMA topology.

This post has used "Broad Strokes" to cover a fairly complex subject and will not be appropriate in every situation. For further information related to this post, please see the below resources.
